How do you show AI thinking to someone who didn't sign up for AI — and still have them trust it?

Problem statement

The brief came from a business development need: how do you assess a company's AI readiness within a legal context before you've even started working with them? The starting points varied wildly. Some organisations still run their contract management lifecycle with physical filing cabinets; others have a full CLM system in place; some are agentic-first. Each of them needs a completely different conversation. The survey was the discovery tool that made that conversation possible.

My role

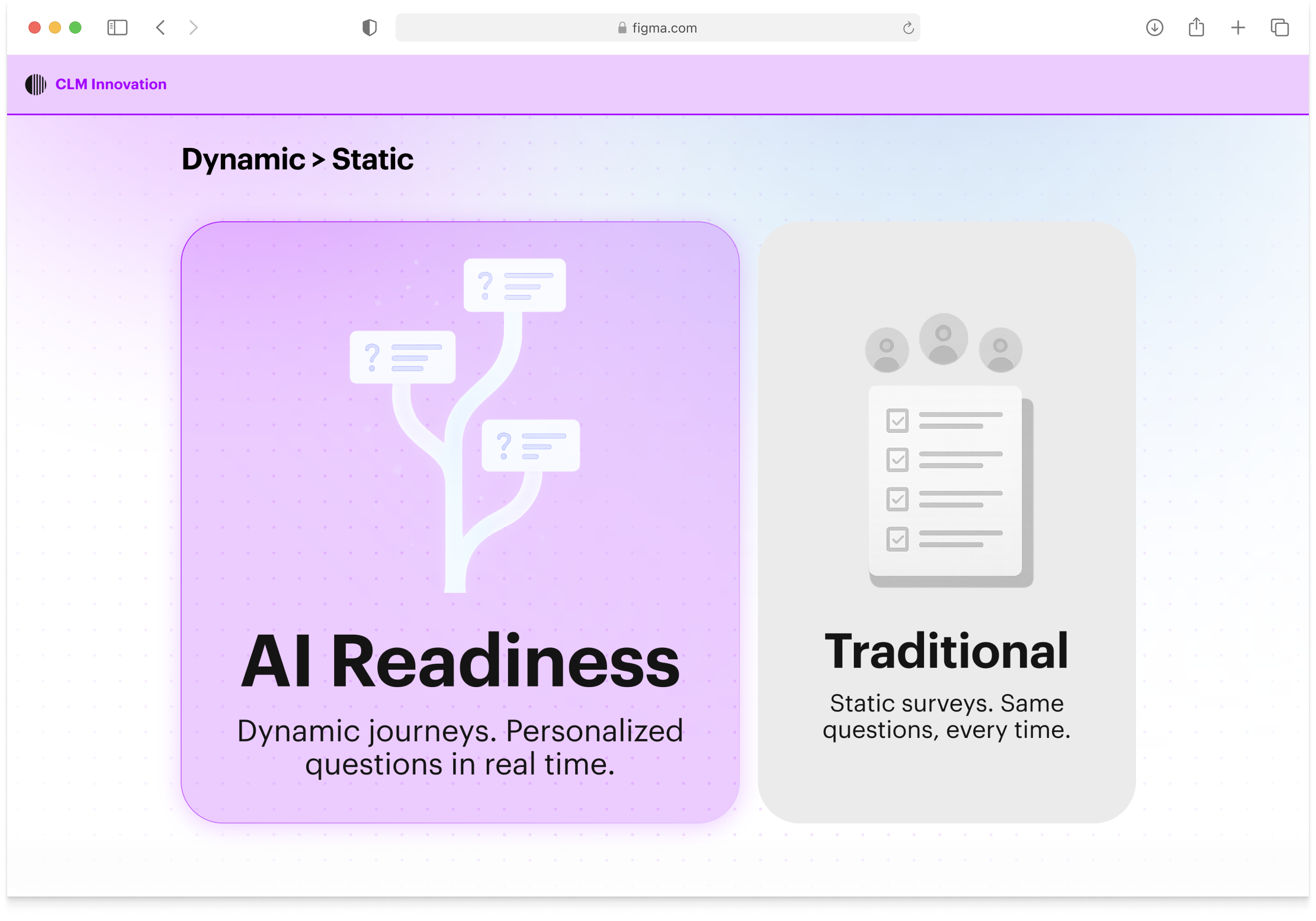

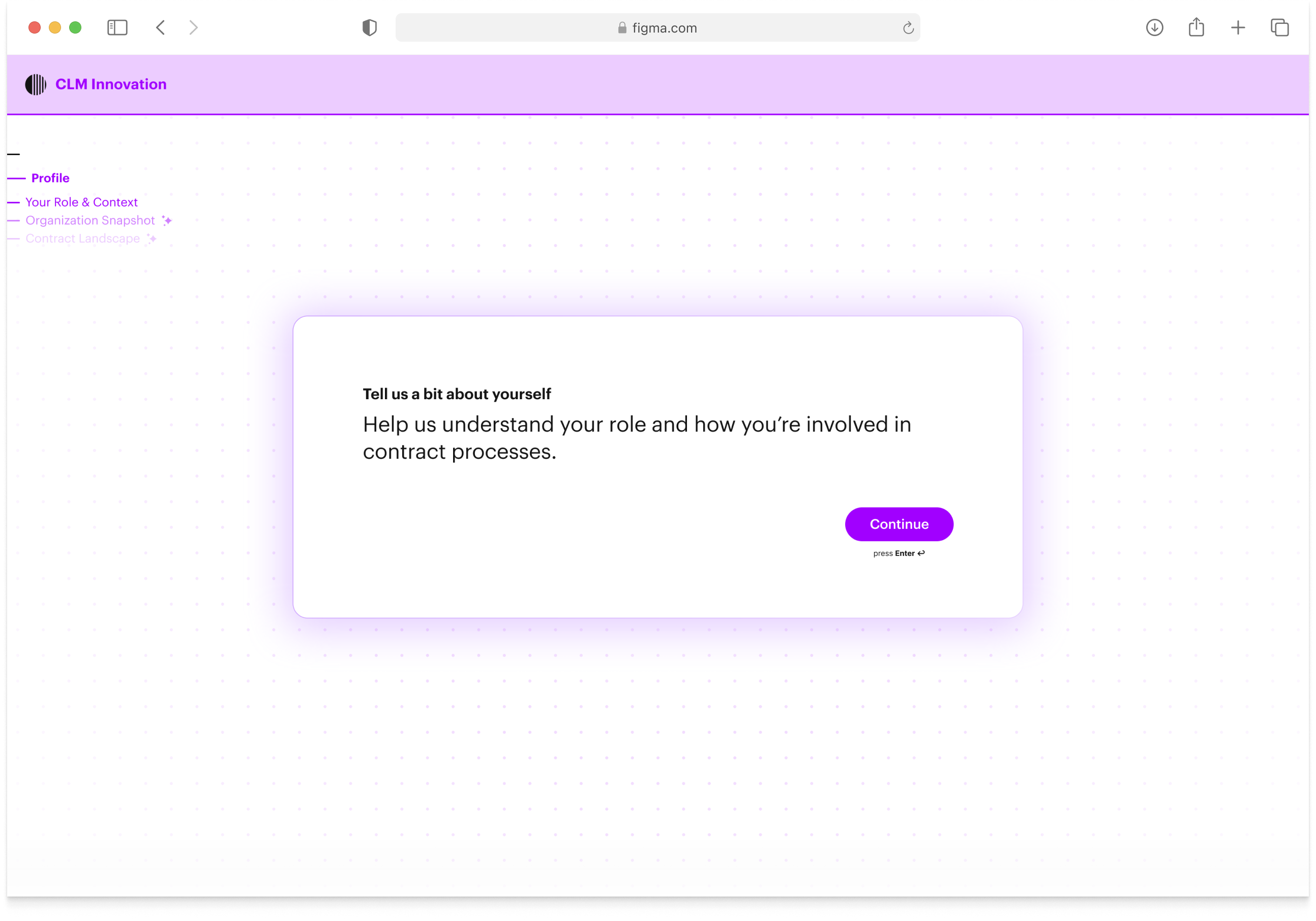

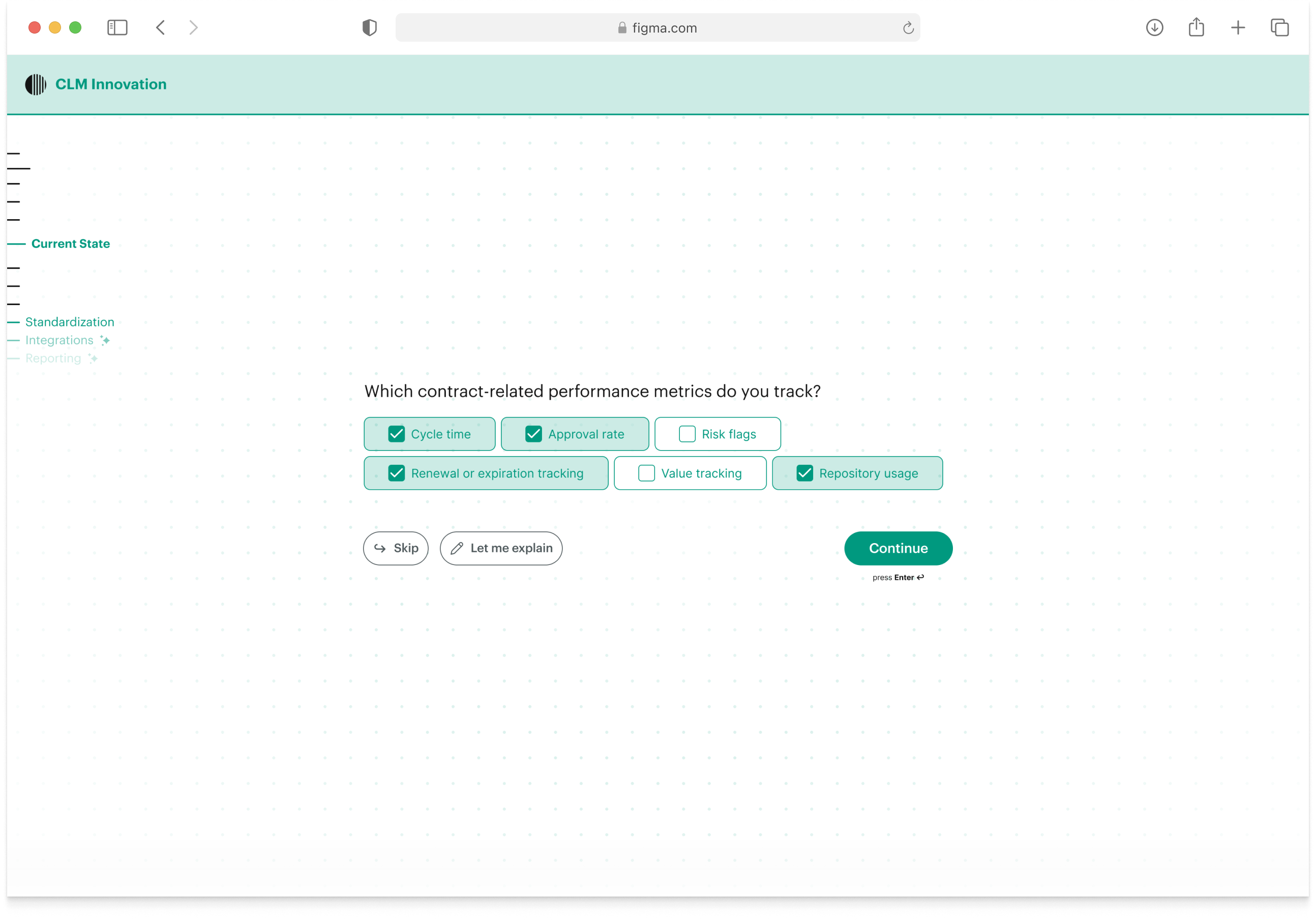

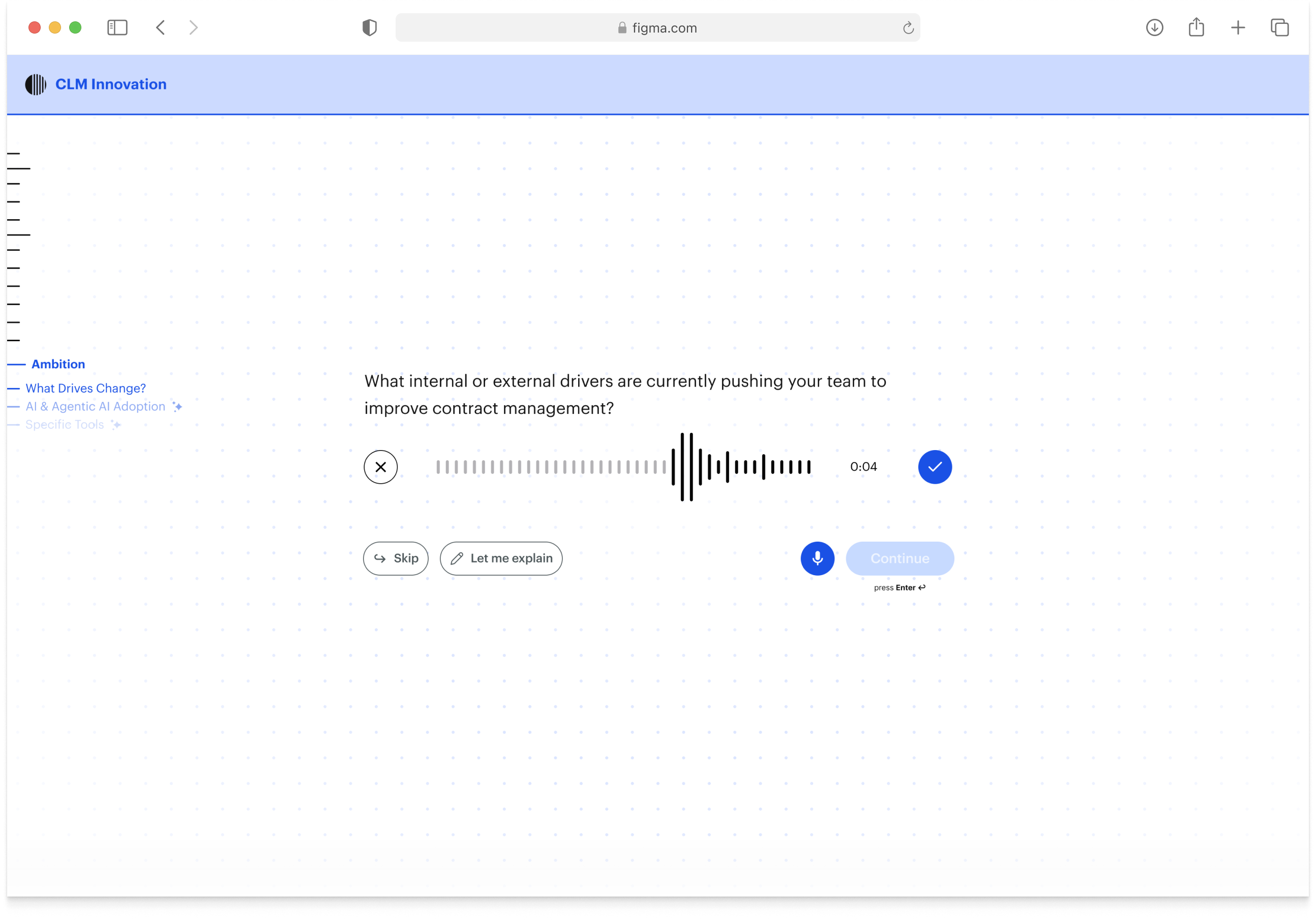

I was the interaction designer on this. I designed the full survey experience: the intent-based question architecture, the onboarding flow, the progress navigation, and the chapter summary moments. The survey runs on a set of intents clustered into topics and chapters — the LLM decides when an intent is satisfied and generates the next question from what the person has already said. Every session looks different.
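
To make the intent structure concrete, here is a minimal sketch of how chapters, topics, and intents might nest, and how the next open intent could be found. Every name here (`Intent`, `Topic`, `Chapter`, `nextOpenIntent`, `markSatisfied`, the example data) is hypothetical and not the production schema. In the real survey the LLM decides when an intent is satisfied and writes the next question; in this sketch that decision is reduced to a flag set by the caller.

```typescript
// Hypothetical data model: names and fields are illustrative assumptions.
type Intent = { id: string; goal: string; satisfied: boolean };
type Topic = { title: string; intents: Intent[] };
type Chapter = { title: string; topics: Topic[] };

type OpenIntent = { chapter: string; topic: string; intent: Intent };

// Walks chapters and topics in order and returns the first intent that is
// still open, or null when every intent has been satisfied.
function nextOpenIntent(chapters: Chapter[]): OpenIntent | null {
  for (const chapter of chapters) {
    for (const topic of chapter.topics) {
      const intent = topic.intents.find((i) => !i.satisfied);
      if (intent) {
        return { chapter: chapter.title, topic: topic.title, intent };
      }
    }
  }
  return null;
}

// Stand-in for the LLM's judgement: the caller reports that an intent is met.
// Returns false if no intent with that id exists.
function markSatisfied(chapters: Chapter[], intentId: string): boolean {
  for (const chapter of chapters) {
    for (const topic of chapter.topics) {
      for (const intent of topic.intents) {
        if (intent.id === intentId) {
          intent.satisfied = true;
          return true;
        }
      }
    }
  }
  return false;
}

// Example data, invented for illustration only.
const exampleSurvey: Chapter[] = [
  {
    title: "Contract management today",
    topics: [
      {
        title: "Where contracts live",
        intents: [
          { id: "storage", goal: "Learn how contracts are stored", satisfied: false },
          { id: "tooling", goal: "Learn whether a CLM system is in place", satisfied: false },
        ],
      },
    ],
  },
];
```

Because the next question is generated from whichever intent is still open, two people answering the same survey can take different routes through the same structure.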

Process

My focus was the interaction layer: I worked directly with the frontend developer while Natalia handled stakeholder management, content design, and the prompting side with the engineering team. We ran a workshop with design to map out the product vision, which is where the deeper question surfaced: how do you make the AI's thinking genuinely visible, not just legible?

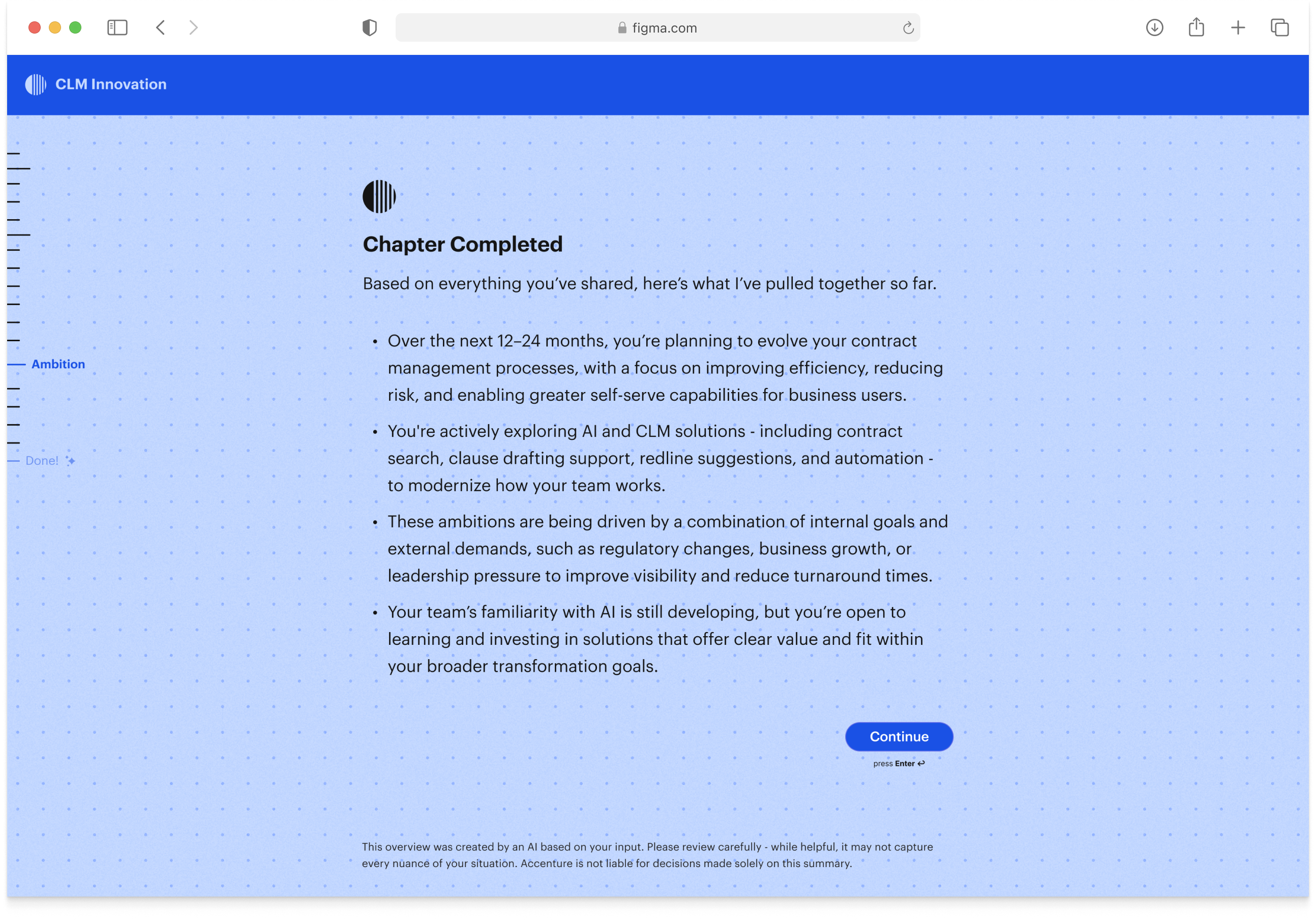

I explored three approaches to that problem. First, an artefact that updated live after every question — too complex. Then separate artefacts after each block — still too much. We landed on chapter summaries: the AI synthesising what it had learned before moving on. I also worked with a visual designer on a concept for the background layer: nodes that connect and shift depending on where the assessment is heading, showing the AI's thinking spatially rather than textually.

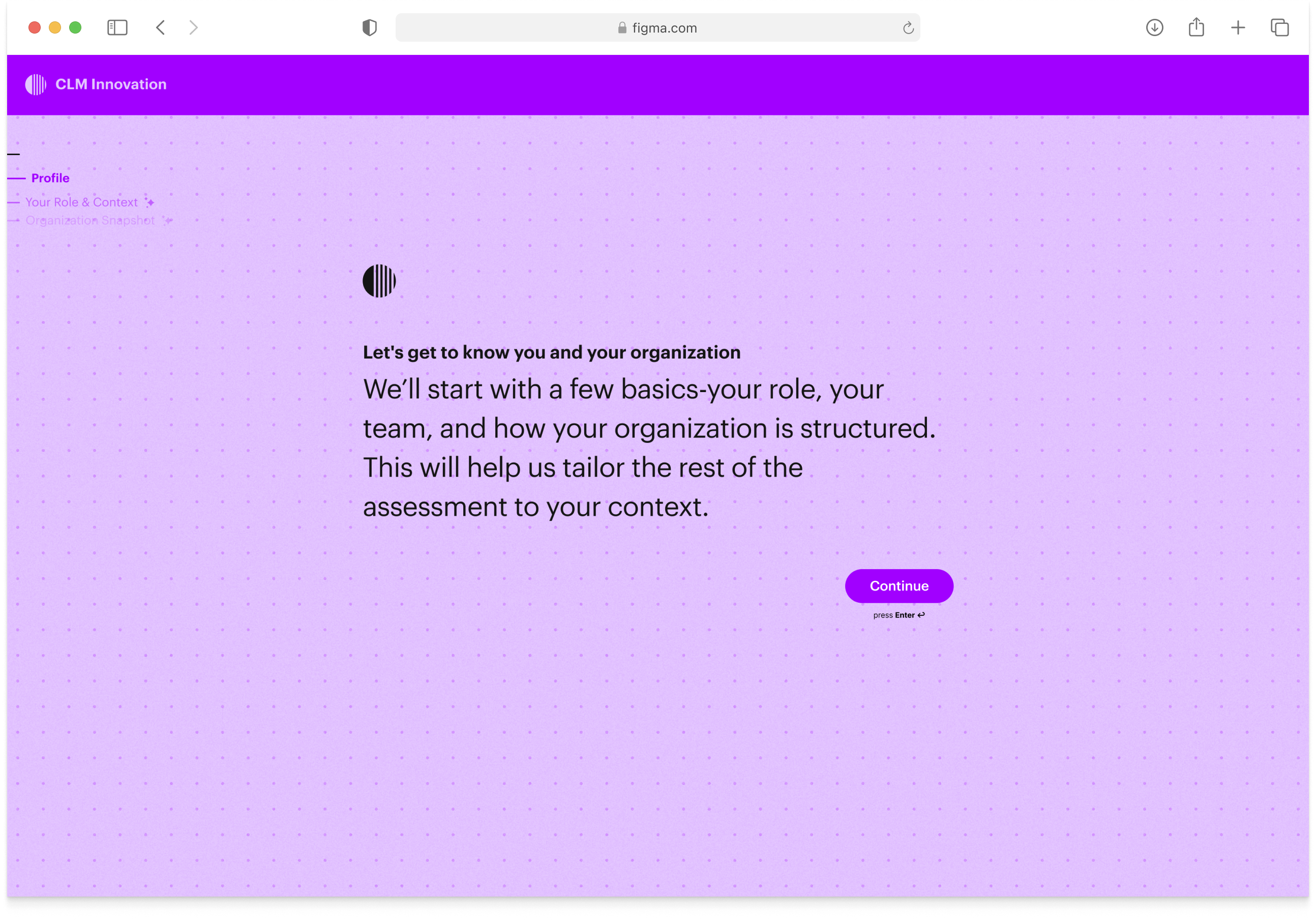

For navigation, I designed a progress sidebar that showed topics rather than time, since we had no idea how long any given session would take. Upcoming topics appeared at low opacity and sharpened as you approached them. If an answer fed back into an earlier topic, that topic was re-emphasised. The survey itself scrolled question by question, open and wide, more conversation than chat interface. A detailed intro page came first, because none of this works if the first question catches someone off guard.
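
The sidebar behaviour can be sketched as a small function that maps each topic to an opacity. This is an illustration under assumptions, not the shipped frontend code: the `SidebarTopic` type, the `topicOpacity` function, and every numeric value (the floor, the step per topic, the dimming of completed topics) are invented for the example.

```typescript
// Hypothetical sidebar state: `revisited` is set when a later answer
// feeds back into this topic.
type SidebarTopic = { title: string; revisited: boolean };

const MIN_OPACITY = 0.2;    // assumed floor for topics far ahead
const STEP_PER_TOPIC = 0.3; // assumed fade per topic of distance
const DONE_OPACITY = 0.6;   // assumed dimming for completed topics

// Returns the opacity for the topic at `index`, given the topic the
// respondent is currently on.
function topicOpacity(topics: SidebarTopic[], index: number, currentIndex: number): number {
  const topic = topics[index];
  // The current topic, and any topic an answer has fed back into, is fully visible.
  if (index === currentIndex || topic.revisited) return 1;
  // Completed topics stay readable but step back.
  if (index < currentIndex) return DONE_OPACITY;
  // Upcoming topics fade with distance and sharpen as the respondent approaches.
  const distance = index - currentIndex;
  return Math.max(MIN_OPACITY, 1 - distance * STEP_PER_TOPIC);
}

// Example data, invented for illustration only.
const sidebarTopics: SidebarTopic[] = [
  { title: "Where contracts live", revisited: true },
  { title: "Review process", revisited: false },
  { title: "Current tooling", revisited: false },
  { title: "Team skills", revisited: false },
  { title: "Appetite for automation", revisited: false },
  { title: "Governance", revisited: false },
];
```

Driving emphasis from distance in topics rather than elapsed time fits a survey whose length is unknown in advance: the sidebar only needs to know where the respondent is, not how long they have left.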

Outcome

518 people have completed the assessment across five campaigns with five different clients. What started as a business development tool has become a repeatable platform, picked up by different teams for different purposes without needing to rebuild it each time.